Container-Based Workloads on AWS and Other Clouds

Load balancing is essential for applications, especially once you start to decouple them and move toward microservices-based architectures. Tools like Docker, Kubernetes and Swarm provide some of the essentials for moving in this direction, but you can get stuck on how to balance your workloads. Kubernetes is an open source system, originally developed by Google, for running and managing containerized microservices-based applications in a cluster; Docker’s Swarm fills a similar role.

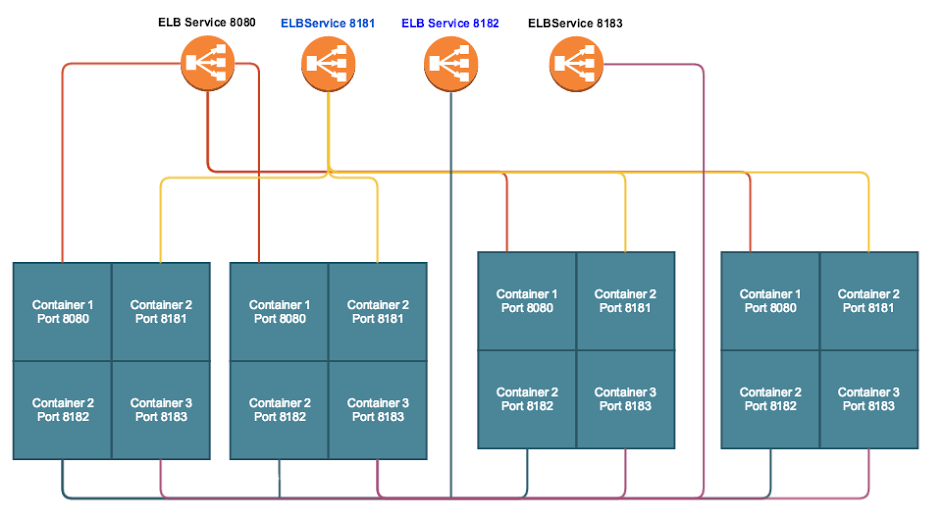

Although Kubernetes and Swarm do provide some basic built-in solutions for exposing these services, you still have to configure AWS’s ELB to support them. One common pitfall that we see in this space is developers and engineers configuring as many as 100 to 150 ELBs just to support basic container-based workloads, due to the limited feature set in ELB. ELB is great for VM balancing, but when you dive into containers, the same logic no longer applies. The workloads have transformed, and decoupling applications into services allows for faster product release times while providing a much more stable platform.

Container-Based Workloads Without A10 Lightning ADC

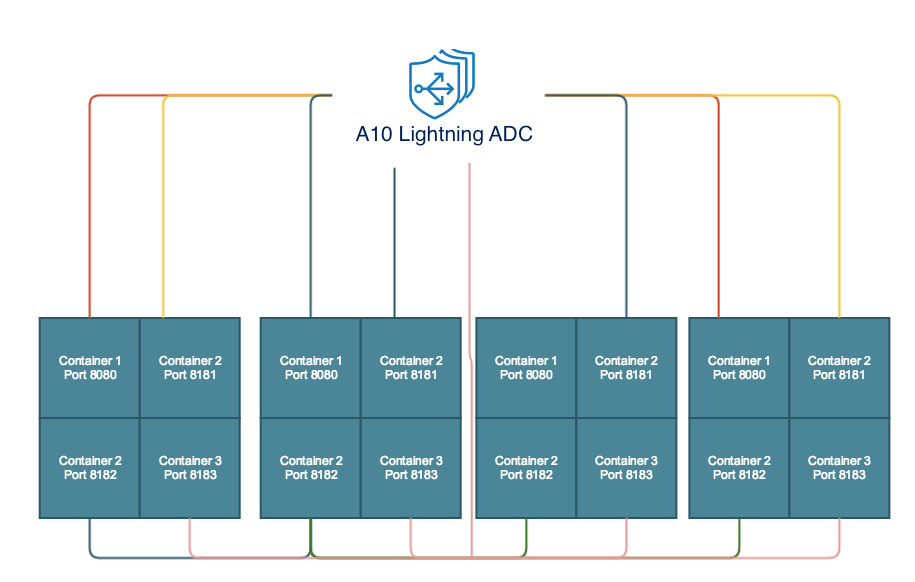

Container-Based Workloads With A10 Lightning ADC

An alternative way to accomplish this in AWS, without having to launch an ELB for every port in your container, can be as simple as starting a pair of EC2 instances and loading up tools like NGINX or HAProxy. However, these do not account for things like traffic surges, distributed denial of service (DDoS) attacks or high availability load balancing; for those, they need to be stitched together with additional tools. If you search the AWS Marketplace for a tool that works with container-based workloads, you’ll be hard-pressed to find anything that is turnkey and runs out of the box. That is where A10 Lightning technology shines: it was developed and engineered in the cloud and with container architectures in mind.
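To make the "one balancer per port" problem concrete, here is a minimal conceptual sketch in Python of a single Layer 7 router that picks a container backend by URL path and rotates through that service's backends round-robin. The service paths, IP addresses and ports are hypothetical, and this is not how NGINX, HAProxy or A10 Lightning is implemented; it only illustrates why one path-aware front end can stand in for a separate balancer per exposed port.

```python
from itertools import cycle

# Hypothetical services and container endpoints (host:port), for illustration only.
SERVICES = {
    "/cart": ["10.0.1.10:31001", "10.0.1.11:31001"],
    "/search": ["10.0.1.10:31002", "10.0.1.12:31002"],
    "/auth": ["10.0.1.11:31003"],
}


class PathRouter:
    """Single entry point that routes by path prefix, round-robin per service."""

    def __init__(self, services):
        # One independent round-robin iterator per service.
        self._pools = {prefix: cycle(backends) for prefix, backends in services.items()}
        # Check longer prefixes first so the most specific match wins.
        self._prefixes = sorted(services, key=len, reverse=True)

    def route(self, path):
        """Return the next backend for the matching service, or None if no match."""
        for prefix in self._prefixes:
            if path == prefix or path.startswith(prefix + "/"):
                return next(self._pools[prefix])
        return None


# With one balancer per exposed port, the balancer count grows with the services.
balancers_per_port_model = len(SERVICES)
# With path-based routing, one (ideally redundant) front end covers all of them.
demo = PathRouter(SERVICES)
for request_path in ["/cart/items", "/cart/items", "/search", "/unknown"]:
    print(request_path, "->", demo.route(request_path))
```

In this sketch, adding a new service means adding one entry to the routing table rather than provisioning another balancer. Health checks, surge handling and DDoS protection are deliberately left out, which is the gap the paragraph above describes.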

You could take a container-based application or service, front-end it with A10 Lightning Application Delivery Service (ADS) and have it up and running in less than 10 minutes. By default, it includes the ability to scale up and down, along with complex Layer 4 and Layer 7 load balancing, security and analytics. It is a home run for a DevOps team: it lets them keep the machine moving forward rather than stopping for every bump in the road. The cost savings of this platform compared to multiple ELBs and point solutions from NGINX and HAProxy are a further benefit. When moving forward in container-based technology, you should work with a solution that preserves the best practices of VM-based approaches and allows easy integration and rollout of new application architectures.

Seeing is believing.

Schedule a live demo today.