74% of security professionals are increasing their spend on DDoS defenses, but will it be enough to combat the growing width, sophistication and intensity of today’s attacks? Confidence continues to wane that doing more with legacy DDoS defenses will meet the challenge.

Join a panel of experts from A10 and Ixia for a timely webinar on rethinking your approach to DDoS defenses. Learn why weaponized IoT attacks mandate updated approaches and how to maximize ROI while scaling defenses.

HOST

00:00

I will be handling all of the housekeeping and making sure that everything runs smoothly today.

00:24

Now I’d like to introduce our speakers for today: Don Shin from A10, Amritam Putantunda from Ixia, and TAKA from A10 Networks, who will present Rethinking DDoS: Stronger Defenses that Make Economic Sense. Take it away, guys.

DON

00:43

All right. Hey, perfect. Thanks a lot, Alex. So thanks, everybody, for joining this session. We’ve got a lot of information to share with you, and here’s the agenda: we’ll start with a little market background to set the stage for how to improve your DDoS defenses and how to use testing to improve your decisions about defense methods. Then, at the end, we’ll show you a demonstration of a methodology that IXIA has put together for testing DDoS defenses, along with some results from applying that methodology to the A10 platform.

01:29

So first off, I think it’s pretty clear, and the fact that you’ve joined this session suggests you’d agree, that there is a systemic problem in our industry around DDoS attacks.

01:39

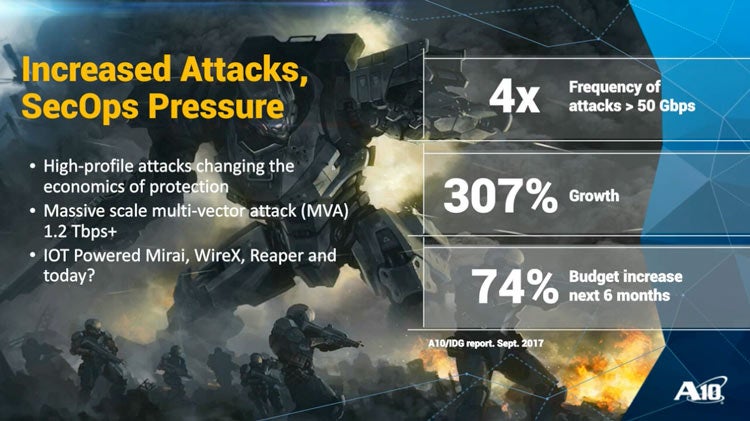

Right? Recently we did a survey with IDG of your counterparts, and these are the results that came back: there was a fourfold increase in large DDoS attacks greater than 50 gigabits per second, with a frequency increase of 307%, which is astounding, right?

02:01

And as a result, businesses are clearly in a cycle of spending and investing more on DDoS defenses.

02:11

This particular survey indicated that 74% of businesses are planning to increase their spend on DDoS defenses. So part of what we want to do here is capture what the landscape of your defenses looks like today and what some of the better approaches are that you can take.

02:33

And then, once again, how can you verify that, using testing as a strategy?

02:39

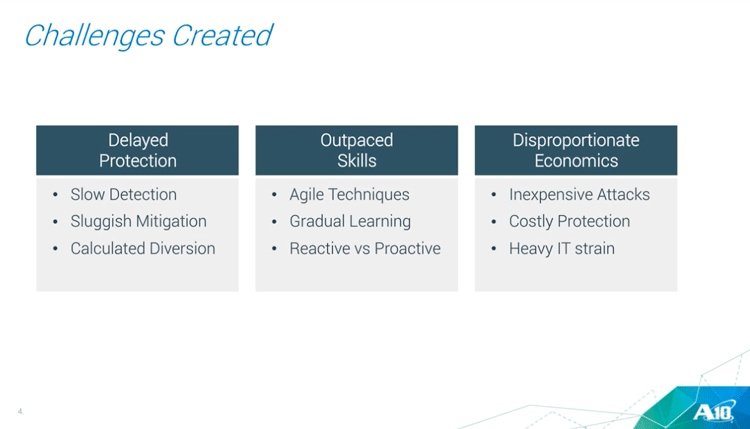

And so, as people make these investments, they’re looking at their existing DDoS defense strategies, and certain clear things are coming out.

02:50

One is that slow detection, sluggish DDoS mitigation, and traffic diversions are really becoming a problem.

03:04

Businesses are building agility into their systems, and their DDoS defenses are not keeping up with that.

03:10

And fundamentally there’s an economic problem that we have to figure out how to overcome: DDoS attacks are very inexpensive, yet defenses are very costly, right?

03:24

And defenses tend to put quite a drain on an organization. So I thought we’d take a look at one example of this disproportion in the world we live in today.

03:36

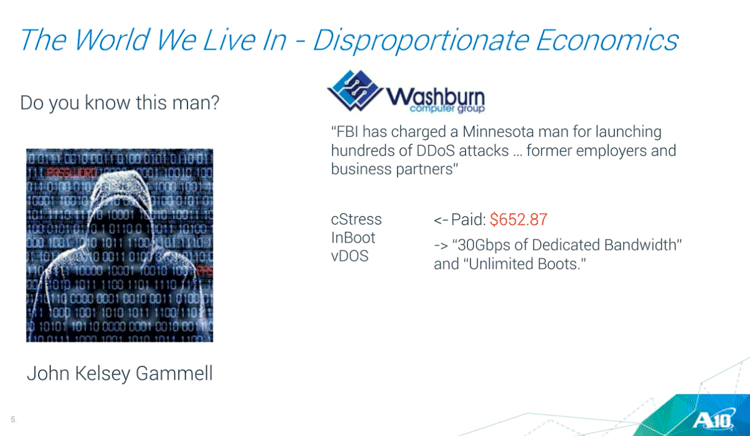

I came across a recent article that I thought was pretty interesting. So, question: do you know this man? His name is John Kelsey Gammell: good-looking man, you know, a business-type person and whatnot.

03:49

But what’s interesting is that the FBI charged this Minnesota man with launching hundreds of DDoS attacks against his former employer and his business partners.

04:00

So, you know, that’s pretty unusual in itself, the fact that there’s attribution of who’s doing these kinds of attacks, but it also shows that average business people are doing it too.

04:10

But what was more interesting to me about this particular story was that he contacted three stresser companies, or DDoS-for-hire companies, and paid $652.87.

04:30

And for that amount of money, what he got in exchange was 300 gigabits of dedicated bandwidth with unlimited usage.

04:37

And he did this for three straight months. Once he had launched his first DDoS attack against Washburn Computer Group, he turned around and started launching attacks against a variety of other organizations.

04:52

And of course, that’s the boys-and-their-toys kind of thing, right? Once they have those capabilities, they’re going to use them.

04:59

This shows the environment that exists, why DDoS has become such a systemic problem, and how easy it is for nefarious actors to launch these attacks against you. So, as you evaluate how to improve your DDoS defenses, I think there are four really important considerations in defining an effective defense strategy.

05:33

These four can be broken down into the following categories, which, to a large extent, also describe what makes one DDoS defense solution better than another.

05:45

One element has to do with precision, and we’ll go into a lot more detail on each of these. Even with precision, there’s a business impact, which has everything to do with detection errors, because whenever there’s an error, there’s a cost.

06:04

Part of the cost is your response teams having to carve time out of their busy day to chase nonexistent events, or an actual attack leaking through because the DDoS defenses were too leaky.

06:23

Then there’s scalability, especially given today’s environment of IoT-based attacks and the capabilities attackers have.

06:33

You really have to figure out the optimal way to scale in the near term while also protecting those investments in the long term.

06:42

And then we talked a little bit about your frontline defenders, right? IT and security people are just overburdened, and you need tools that make them more effective by leveraging automation.

07:00

And lastly, a systemic problem in the industry is that legacy DDoS defense systems are simply too expensive, and building adequate defenses costs quite a bit of money.

07:14

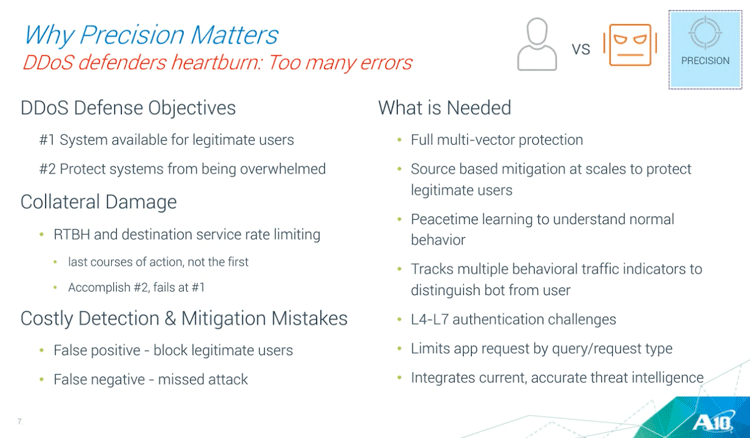

So let’s take a look at the first one: why does precision matter? When you talk to frontline DDoS defenders, this is a common point they always raise, a source of real heartburn: there are just too many errors.

07:30

But to consider these errors, we need to step back and think about the objective of a DDoS defense. I think there are really two objectives here, and we need to be clear about which one takes priority.

07:45

Both are important. But the first one is that DDoS is a denial of service, so you’re trying to protect your system so that legitimate users can access it.

07:58

The second is how to protect your system from being overwhelmed. You should always care about the first one first. Okay, and why is that important?

08:10

For example, in DDoS there’s a lot of collateral damage when an attack occurs, and some of it is self-inflicted because of how legacy defenses work. Remotely triggered black holing, destination service rate limiting, and traffic shaping, for example, should be the last course of action, not the first, because they’re indiscriminate. They accomplish the second priority, which is protecting your system from falling over.

08:43

But they fail on the first one because of the collateral damage they cause. Once you trigger a black hole, everything goes away, right?

08:52

In effect, you’re helping accomplish the attacker’s objectives. In the industry we refer to these precision errors as false positives, which block legitimate users, and false negatives, which are attacks that are missed because the system is too leaky.
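To make the two error types concrete, here is a minimal Python sketch that computes false-positive and false-negative rates from the counts a test run might produce. The class, its field names, and the sample counts are hypothetical and chosen for illustration; they are not taken from A10's or Ixia's reporting.

```python
from dataclasses import dataclass


@dataclass
class MitigationOutcome:
    """Traffic counts from one hypothetical test run."""

    legit_total: int    # legitimate requests sent
    legit_blocked: int  # legitimate requests dropped (false positives)
    attack_total: int   # attack packets or requests sent
    attack_passed: int  # attack traffic that leaked through (false negatives)

    def false_positive_rate(self) -> float:
        """Share of legitimate traffic wrongly blocked."""
        return self.legit_blocked / self.legit_total if self.legit_total else 0.0

    def false_negative_rate(self) -> float:
        """Share of attack traffic wrongly let through."""
        return self.attack_passed / self.attack_total if self.attack_total else 0.0


run = MitigationOutcome(legit_total=10_000, legit_blocked=25,
                        attack_total=1_000_000, attack_passed=4_000)
print(f"false positive rate: {run.false_positive_rate():.2%}")  # 0.25%
print(f"false negative rate: {run.false_negative_rate():.2%}")  # 0.40%
```

Tracking both rates side by side reflects the priority order above: a blunt mitigation can drive the false-negative rate to zero while the false-positive rate quietly climbs.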

09:11

So what do these kinds of DDoS defenses need? For coverage, you have to have full multi-vector protection.

09:17

That means protection at the volumetric, network, and application layers, including sophisticated low-and-slow DDoS attacks.

09:25

Your mitigation solution should be source-based, not destination-based: able to surgically differentiate between an attacker and a legitimate user and block only the attacker.

09:41

You do that with peacetime learning, because your environment is unique and has unique characteristics. The tool needs to learn that behavior, using multiple indicators to track behavioral characteristics that go well beyond simple BPS or PPS in order to distinguish bots from users, right?
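As a rough illustration of peacetime learning across several indicators, the sketch below records per-indicator samples during normal operation and then flags any indicator that exceeds its learned mean plus k standard deviations. The indicator names (`pps`, `syn_ratio`) and the k-sigma rule are assumptions for the example; the webinar does not describe how Thunder TPS actually derives its thresholds.

```python
import statistics


class PeacetimeBaseline:
    """Learns per-indicator norms in peacetime, then flags outliers (k-sigma rule)."""

    def __init__(self, k: float = 3.0):
        self.k = k
        self.samples: dict[str, list[float]] = {}
        self.limits: dict[str, float] = {}

    def observe(self, indicators: dict[str, float]) -> None:
        """Record one peacetime measurement of every indicator."""
        for name, value in indicators.items():
            self.samples.setdefault(name, []).append(value)

    def finalize(self) -> None:
        """Turn the collected samples into per-indicator upper limits."""
        for name, values in self.samples.items():
            mean = statistics.fmean(values)
            std = statistics.pstdev(values)
            self.limits[name] = mean + self.k * std

    def anomalies(self, indicators: dict[str, float]) -> list[str]:
        """Names of indicators that exceed their learned limit."""
        return [name for name, value in indicators.items()
                if name in self.limits and value > self.limits[name]]


baseline = PeacetimeBaseline(k=3.0)
for pps, syn_ratio in [(900, 0.10), (1000, 0.12), (1100, 0.11)]:
    baseline.observe({"pps": pps, "syn_ratio": syn_ratio})
baseline.finalize()
# A flood with normal packet rate but an abnormal SYN share is still caught.
print(baseline.anomalies({"pps": 1200, "syn_ratio": 0.9}))  # ['syn_ratio']
```

The usage line shows why more than BPS or PPS matters here: the packet rate stays inside its learned range, and only the second indicator trips.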

10:10

And then there’s that whole slew of countermeasure capabilities from layer 4 through layer 7, through authentication or through query or request limiting.
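Query or request limiting is commonly implemented with a token bucket per source. The following is a minimal sketch of that general technique, assuming callers pass explicit timestamps in seconds (which keeps it deterministic); the rate and burst values are hypothetical, not settings from any product.

```python
class TokenBucket:
    """Allows `rate` events per second on average, with bursts up to `burst`."""

    def __init__(self, rate: float, burst: float, now: float):
        self.rate = rate
        self.capacity = burst
        self.tokens = burst  # start full
        self.last = now

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, capped at the bucket size.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class PerSourceLimiter:
    """Keeps one token bucket per source address."""

    def __init__(self, rate: float, burst: float):
        self.rate = rate
        self.burst = burst
        self.buckets: dict[str, TokenBucket] = {}

    def allow(self, source: str, now: float) -> bool:
        bucket = self.buckets.get(source)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.burst, now)
            self.buckets[source] = bucket
        return bucket.allow(now)


limiter = PerSourceLimiter(rate=2.0, burst=2.0)
print([limiter.allow("198.51.100.7", 0.0) for _ in range(3)])  # [True, True, False]
print(limiter.allow("198.51.100.7", 0.5))  # True: half a second refills one token
```

Because each source gets its own bucket, a noisy source is throttled without touching anyone else, which is the source-based behavior described above rather than a destination-wide limit.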

10:20

And there’s another important element of DDoS defense that isn’t leveraged nearly enough today: threat intelligence.

10:29

Think about the criminals in your city: there are repeat offenders.

10:40

So there are mug shots of criminals and their records, and the police use those to identify repeat offenders.

10:51

It’s the same thing for DDoS. DDoS attackers use botnets and are repeat offenders in how they use their tools, yet today most companies are not incorporating threat intelligence into their DDoS defenses.
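A minimal sketch of incorporating a threat-intelligence feed: sources are checked against known-bad addresses and prefixes using Python's standard `ipaddress` module. The feed format (one IP or CIDR per entry) and the sample entries, drawn from documentation-reserved ranges, are assumptions for illustration.

```python
import ipaddress


class ThreatIntelBlocklist:
    """Matches source addresses against a hypothetical feed of IPs and CIDR prefixes."""

    def __init__(self, entries: list[str]):
        # strict=False tolerates entries like "203.0.113.9/24" with host bits set.
        self.networks = [ipaddress.ip_network(e, strict=False) for e in entries]

    def is_known_bad(self, source: str) -> bool:
        addr = ipaddress.ip_address(source)
        # Membership between mismatched IP versions is simply False.
        return any(addr in net for net in self.networks)


feed = ThreatIntelBlocklist(["203.0.113.0/24", "198.51.100.7", "2001:db8::/32"])
print(feed.is_known_bad("203.0.113.45"))  # True
print(feed.is_known_bad("192.0.2.1"))     # False
```

A linear scan is fine for a sketch; a feed at internet scale would more likely use a prefix trie or a hash set of exact addresses.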

11:11

So one of the things we talked about was collateral damage. This particular slide is a little complicated and very technical.

11:18

But here’s a list of countermeasure strategies for when you’re under a DDoS attack. On the left, going from top to bottom, the top ones have a very high impact on valid users as well as on attacking agents, like the remotely triggered black hole or traffic shaping for a service that we talked about. The bottom ones have very little impact on legitimate users: DDoS attackers may use odd-looking packets to knock over a firewall, for example, but that would not be normal behavior from a legitimate user.

12:04

So what you see here is that the top has lots of collateral damage, and the bottom has none.

12:13

But today’s sophisticated attacks play mostly in this blue range here, which uses source-based behavioral characteristics to surgically differentiate between a legitimate user and an attacking agent.

12:30

And that’s what strong DDoS defense solutions should have. At A10 we make a product called Thunder TPS, and that’s what it does.

12:39

It tracks these behaviors and has the full suite of mitigation or countermeasure capabilities, but it is especially strong in the middle area: tracking the behavioral elements of attacking agents.

12:54

Okay, so we’ve talked about that portion. Now let’s talk about the workflow. Another common complaint you hear from frontline defenders is that DDoS defenses require continuous manual intervention.

13:12

No organization has unlimited resources, and during an attack you’re tying up your people instead of letting them do more valuable work.

13:20

So what’s needed are mechanisms with automation built in, so that you can act based on policies and the tool will escalate as the attack progresses.

13:36

And I showed earlier that not all mitigations are the same, right?

13:41

Some types are very system-focused; others are user-focused. So you want the mechanism to be able to step through them.

13:52

And the other portion of it is that our modern IT organizations have really embraced orchestration and automation in order to speed up the deployment and optimization of their networks and DDoS defenses.

14:07

So you need a RESTful API to be able to control all of this, because in the end, people are money.

14:16

People and time are critical; an organization’s most critical asset is its people. So what’s needed is a mechanism like A10’s five-level policy-based mitigation escalation.

14:31

Here we have five levels. At peacetime we’re doing learning. Then we’re tracking 27-plus indicators to look for anomalous behavior, and once they trip, it escalates to level 1.

14:46

There we start to apply the lower-level mitigations that are not so draconian or so aggressive.

14:56

But as the attack gets worse and worse, once we get to level 3 or level 4, we start to apply limiting techniques to make sure the system doesn’t fall over.

15:09

But the point is to work first on protecting your users. Only when the system is about to fall over do you start using very aggressive techniques.
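The escalation idea can be sketched as a small state machine: step up one level when enough indicators trip, step back down when the attack subsides, and apply mitigations cumulatively from least to most collateral damage. The level-to-action mapping and the trip threshold below are hypothetical illustrations, not A10's actual policy definitions.

```python
from dataclasses import dataclass

# Hypothetical actions per level, ordered from least to most collateral damage.
LEVEL_ACTIONS = {
    0: ["peacetime learning"],
    1: ["drop malformed packets", "apply threat-intel blocklist"],
    2: ["source-based behavioral checks", "challenge or authenticate sources"],
    3: ["per-source request and query rate limiting"],
    4: ["destination rate limiting (last resort)"],
}
MAX_LEVEL = max(LEVEL_ACTIONS)


@dataclass
class EscalationPolicy:
    trip_threshold: int = 1  # tripped indicators needed to escalate one level
    level: int = 0

    def update(self, tripped_indicators: int, attack_subsided: bool) -> int:
        """Move at most one level per evaluation and return the new level."""
        if tripped_indicators >= self.trip_threshold and self.level < MAX_LEVEL:
            self.level += 1
        elif attack_subsided and self.level > 0:
            self.level -= 1
        return self.level

    def active_actions(self) -> list[str]:
        """Mitigations in force: every level from 1 up to the current one."""
        return [a for lvl in range(1, self.level + 1) for a in LEVEL_ACTIONS[lvl]]


policy = EscalationPolicy(trip_threshold=2)
policy.update(tripped_indicators=3, attack_subsided=False)
print(policy.level, policy.active_actions())
```

Moving one level per evaluation is a deliberate choice in this sketch: the blunt, destination-wide action at the top is only reached after the gentler, user-preserving levels have been tried.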

15:19

And this should all be automated, because response time is important in DDoS attacks, and people touching tools slows down the response.

15:29

Now, another element we talked about earlier is scalability. In the modern world, especially with IoT bots, scalability involves more than what we have traditionally thought of: data rates and packet rates.

15:47

The DDoS attack landscape has changed, and one of the things you hear from companies is: wow, if we have to get to these kinds of scale-up and scale-out levels, it becomes very expensive with legacy DDoS defenses.

16:03

So what’s changed, right? It’s really around last year, with the DDoS of Things really growing.

16:15

One obvious change was the intensity of the attacks, such as the attack on Dyn, which peaked at 1.2 terabits per second.

16:24

But there’s more to it than that. There’s also the width of DDoS attacks: IoT devices are spread all around the world.

16:32

They’re geographically diverse, and there are millions of vulnerable IoT devices that a bot herder can leverage.

16:44

And of course, because these IoT devices weren’t built with software update capabilities, they’re persistent devices that can be leveraged at any time. Even after you reset one, it’s still re-exploitable, and attackers are taking advantage of that.

17:02

Now, there’s another caveat regarding the sophistication available: because these are IoT devices, the malware writers don’t have to hide in the kernel or anything like that.

17:16

They get to leverage the full Linux networking stack, which makes these devices even harder to defend against.

17:24

And you need surgical capability as a result. These statistics are about the attacking agents.

17:32

This year was really the crossover point, where there are now more Linux-based botnets than Windows-based infected systems.

17:42

According to Kaspersky, last quarter nearly 70 percent of DDoS attacks were launched from Linux botnets, which means that cloud and especially IoT are being leveraged as agents for DDoS attacks.

17:58

So within our product portfolio, we’ve addressed scalability both in throughput and in the ability to protect against a wider range of attacking agents.

18:11

In this example, we’re showing that we can track 128 million sessions at a time, along with our threat intelligence database, which provides intelligence on known bad actors, for example which bots have been attacking in the last two hours.

18:30

And this works at internet scale, so we can incorporate open resolvers into the threat intelligence for blocking, as well as bots such as the Reaper bot, which has been spreading in the wild recently and looks like it’s going to be used in some nefarious act. The width becomes pretty critical for something like that.

18:56

But at the end of the day, especially when we’re talking about DDoS, it all comes down to money.

19:03

For companies, building adequate DDoS defenses, with a sufficient ratio of mitigation capacity to total internet capacity, becomes very expensive with legacy solutions.

19:18

So here I wanted to show you a comparison of three manufacturers, A10, Arbor, and Radware, using the flagship product each of these companies has in the market.

19:33

As you read across the line here, each entry shows the size of the device, how much bandwidth it can handle for DDoS protection, and what packet rate it can sustain. Down at the bottom, we show how many A10 14045 devices you need to match the bandwidth of Arbor or Radware.

19:58

And then there’s a pictorial representation of how many rack units (RU) you need to achieve the equivalent.

20:06

The point here is that they’re not the same, right? The differences have a very big cost implication, especially for large service providers or large businesses building huge networks, in the number of boxes you’re going to need, which has a dramatic impact on total cost.
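The box-count comparison reduces to a ceiling division. This sketch reuses the 300 Gbps per-device figure quoted for the Thunder 14045 in this webinar; the 1,200 Gbps target (roughly the Dyn peak mentioned earlier), the 100 Gbps comparison device, and the 2 RU per device are hypothetical inputs, not the figures from the slide.

```python
import math


def appliances_needed(target_gbps: float, per_device_gbps: float) -> int:
    """Whole devices required to reach a target mitigation capacity."""
    if per_device_gbps <= 0:
        raise ValueError("per-device capacity must be positive")
    return math.ceil(target_gbps / per_device_gbps)


def rack_units_needed(target_gbps: float, per_device_gbps: float, ru_per_device: int) -> int:
    """Rack space consumed by those devices."""
    return appliances_needed(target_gbps, per_device_gbps) * ru_per_device


# Hypothetical inputs: a 1,200 Gbps target and 2 RU per device.
print(appliances_needed(1200, 300))     # 4
print(appliances_needed(1200, 100))     # 12
print(rack_units_needed(1200, 100, 2))  # 24
```

Rounding up matters for cost: any capacity shortfall, however small, costs a whole additional appliance plus its rack space.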

20:30

And then there’s managing these complex units, or multiple racks of units, to build DDoS defenses that are ready for attacks from the DDoS of Things.

20:44

And that’s the important point we want to share with you: when you’re making these investment decisions, there’s more to it than looking at data sheets or at what you’ve used in the past, and staying stuck investing in a solution that may be inferior.

21:07

And so our friends at IXIA came up with a vendor-neutral DDoS testing methodology, and they invited us to provide equipment so they could run through the methodology and extract data that helps companies like yours make more informed decisions.

21:34

And so I want to pass this over to Amritam to talk through the methodology and give you some more insights.

AMRITAM

21:43

Thanks a lot, Don. Thank you all for joining. When we were creating the DDoS test methodology, as Don showed earlier, there are several types of mitigation techniques.

21:57

So from the test perspective, it also became complex, because we had to create test cases covering the whole spectrum of DDoS attack types that could expose a solution’s potential vulnerability to a particular DDoS variant.

22:16

Those are some of the things I want to cover in the next few slides, where I’ll go through the dilemma a test tool faces in creating a comprehensive test methodology: what kind of hardware we needed and what challenges we faced.

22:32

And I’ll also give a small insight into some of the DDoS test cases themselves.

22:38

Of course, I can’t go through the whole methodology and the test report, so I encourage you to download them and go through all the details and graphs they cover.

22:50

First of all, when testing DDoS, the biggest challenge is the evolution of DDoS itself.

22:56

Back when we got introduced to security and DDoS, most DDoS attacks targeted one and only one thing, bandwidth: they tried to oversubscribe the bandwidth and disrupt normal services.

23:14

But as the years went by, the Internet of Things happened, and CPUs and processors became more and more sophisticated.

23:27

So did the applications, and people realized there were other ways to leverage DDoS. Then came attacks on memory and other resources that were not the obvious target.

23:40

And then came application-based DDoS attacks that target vulnerabilities in the way the application itself works and create huge DDoS floods, which are much more difficult to stop because you cannot simply block the actual application traffic, right?

23:59

But then again, how do you detect a DDoS attack that looks very, very similar to application traffic?

24:06

The biggest challenge from the test perspective was, of course, to mix everything together and create a DDoS methodology with all of these running together, and let me explain why that can be difficult. As explained, we need to stress both throughput and memory.

24:26

So we have to have a volumetric attack, and we also have to target the maximum number of sessions.

24:32

So we have to create concurrent sessions and keep those sessions active in the test tool itself.

24:39

At the same time, we also need different applications and application DDoS attacks, so we need the granularity of application traffic, its intricate behavior, to create a proper application DDoS. We also need scale: as with Mirai, we saw millions and millions of IP addresses or endpoints each generating a small amount of traffic.

25:06

But when they came together, that became a flood of up to 600 gig. So we have to simulate a huge number of IPv4 and IPv6 endpoints.
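Simulating many distinct IPv4 and IPv6 sources amounts to enumerating addresses from large prefixes. This is a minimal sketch with the standard `ipaddress` module, not how BreakingPoint or CloudStorm actually generate endpoints; the prefixes used, the benchmarking range 198.18.0.0/15 and the documentation range 2001:db8::/32, are assumptions chosen because they are reserved for testing.

```python
import ipaddress
import itertools


def simulated_endpoints(prefix: str, count: int) -> list[str]:
    """Return the first `count` usable host addresses in `prefix`.

    For large prefixes, `hosts()` yields addresses lazily, so slicing it
    with islice avoids materializing a /32 IPv6 network.
    """
    network = ipaddress.ip_network(prefix)
    return [str(ip) for ip in itertools.islice(network.hosts(), count)]


ipv4_bots = simulated_endpoints("198.18.0.0/15", 100_000)
ipv6_bots = simulated_endpoints("2001:db8::/32", 100_000)
print(ipv4_bots[:2], ipv6_bots[:2])
```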

25:15

The most important part is that all these DDoS attacks count for nothing if we don’t have realistic application mixes. It’s very easy to mitigate DDoS attacks if they’re running in isolation.

25:25

You just block everything. But that’s not how it works in the real world, right?

25:30

Hence application mixes: the real-world applications that everyday users and subscribers rely on should keep uninterrupted access, and users should be able to do whatever they’re doing while the mitigation tool blocks 100, 200, 300, however many gigs of DDoS traffic and millions of hostile bot sessions.

25:57

For all this to happen, we needed hardware, and for that we selected our CloudStorm hardware.

26:08

We selected it because we needed to create volumetric traffic at both high throughput and high scale, and that’s where we need a strong FPGA. Application traffic, with all its intricacies, is generated by a network processor.

26:23

So we needed a bigger network processor, a bigger FPGA, and a very big pipe. We used our newest CloudStorm solution to create the DDoS tests and run them. I want to go through, in very brief detail, some of the DDoS attacks we added. As I said on the first slide, we have to have all the different variants of DDoS. We cannot cover every DDoS attack that exists, but we need to check the boxes so that we cover memory, throughput, and application DDoS attacks.

27:03

So we started with UDP fragmentation, where we create UDP packets of different sizes but intentionally fragment them.

27:13

For a DDoS mitigation tool this is tougher, because it has to either reassemble the fragments or understand, identify, and stop any kind of malicious fragmentation.

27:23

We also created different types of TCP floods, which are very common disruptions, I would say.

27:29

We created a Christmas tree packet, which is basically a TCP packet with the FIN, PSH, and URG flags set together. To increase the complexity even more, we added some data bytes to the packet so that it looks like a real TCP transaction.
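A stateless first line of defense against packets like this is a flag-combination check. The flag bit values below are the standard TCP control bits; the particular list of "invalid" combinations is an illustrative assumption, not an exhaustive rule set from any product.

```python
# Standard TCP control bits (RFC 9293).
FIN, SYN, RST, PSH, ACK, URG = 0x01, 0x02, 0x04, 0x08, 0x10, 0x20
XMAS = FIN | PSH | URG  # the "Christmas tree" combination, 0x29


def is_xmas(flags: int) -> bool:
    """True if FIN, PSH, and URG are all set."""
    return (flags & XMAS) == XMAS


def is_invalid_combo(flags: int) -> bool:
    """Flag combinations a normal TCP stack does not send (illustrative list)."""
    if flags == 0:
        return True  # NULL packet: no flags at all
    if flags & SYN and flags & (FIN | RST):
        return True  # opening and closing a connection in the same segment
    return is_xmas(flags)


print(is_invalid_combo(FIN | PSH | URG))  # True
print(is_invalid_combo(PSH | ACK))        # False: ordinary data segment
```

Note that adding payload bytes, as the test did, does not change the flag bits, so this check still catches the packet even though it looks more like real data.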

27:45

Apart from that, we also added the SYN flood, one of the most common TCP attacks, where we send millions and millions of SYN packets to exhaust layer 4 resources.
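A SYN flood exhausts state by leaving handshakes half open. A minimal sketch of one detection idea, counting half-open handshakes per source and flagging sources above a threshold, is shown below; the class name and threshold are hypothetical.

```python
from collections import defaultdict


class HalfOpenTracker:
    """Counts half-open TCP handshakes per source (hypothetical threshold)."""

    def __init__(self, max_half_open: int):
        self.max_half_open = max_half_open
        # source -> set of (source_port, destination_port) awaiting the final ACK
        self.pending: dict[str, set[tuple[int, int]]] = defaultdict(set)

    def on_syn(self, src: str, sport: int, dport: int) -> None:
        self.pending[src].add((sport, dport))

    def on_handshake_ack(self, src: str, sport: int, dport: int) -> None:
        # The client completed the three-way handshake, so it is no longer half open.
        self.pending[src].discard((sport, dport))

    def suspicious_sources(self) -> list[str]:
        return sorted(src for src, conns in self.pending.items()
                      if len(conns) > self.max_half_open)


tracker = HalfOpenTracker(max_half_open=3)
for port in range(1000, 1010):  # ten SYNs, never completed
    tracker.on_syn("198.51.100.9", port, 80)
tracker.on_syn("192.0.2.10", 2000, 80)
tracker.on_handshake_ack("192.0.2.10", 2000, 80)
print(tracker.suspicious_sources())  # ['198.51.100.9']
```

Real SYN floods usually spoof random source addresses, so per-source counting only catches unspoofed floods; SYN cookies, which avoid keeping state until the handshake completes, are the usual complement.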

27:59

We also added a SYN variant, so a lot of volumetric traffic was added. This one is a packet where the SYN flag is set together with a second flag.

28:09

These used to be very effective volumetric DDoS attacks that are known to have brought down systems in the past, and they’re very simple as well; there isn’t a lot of complexity in them. But of course we have to have all the different variants, so we also added some application-based attacks.

28:27

One of the most common ones, of course, is DNS. There have been multiple instances where, if the DNS service can be brought down, the entire website, or part of its infrastructure, goes down with it. That’s why this service is heavily targeted by DDoS attacks.

28:47

You literally don’t have internet: technically, you’re off the internet if the DNS service goes down. So of course we tried to include different DNS variants.

28:58

We created malformed DNS packets and DNS query floods, where the clients send billions and billions of queries toward the victim server, and also sent certain [unclear] DNS queries.
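Malformed DNS packets can often be discarded by structural checks before any resolver logic runs. Below is a minimal sketch of validating a DNS query against the RFC 1035 wire format (12-byte header, length-prefixed labels of at most 63 bytes, names of at most 255 bytes, then QTYPE and QCLASS). Which checks a production mitigation device applies is not stated in the talk, so this rule set is an assumption.

```python
import struct


def is_well_formed_query(msg: bytes) -> bool:
    """Structural sanity check for a single-question DNS query (RFC 1035 layout)."""
    if len(msg) < 12:
        return False  # shorter than the fixed header
    _id, flags, qdcount, _an, _ns, _ar = struct.unpack("!6H", msg[:12])
    if flags & 0x8000:
        return False  # QR bit set: a response, not a query
    if qdcount != 1:
        return False  # ordinary queries carry exactly one question
    pos, name_len = 12, 0
    while True:
        if pos >= len(msg):
            return False  # name runs past the end of the packet
        label_len = msg[pos]
        if label_len == 0:
            pos += 1  # root label terminates the name
            break
        if label_len > 63:
            return False  # over-long label (also rejects compression pointers)
        pos += 1 + label_len
        name_len += 1 + label_len
        if name_len > 254:
            return False  # whole name, with terminator, exceeds 255 bytes
    return pos + 4 <= len(msg)  # QTYPE and QCLASS must follow the name


header = struct.pack("!6H", 0x1234, 0x0100, 1, 0, 0, 0)
query = header + b"\x07example\x03com\x00" + struct.pack("!2H", 1, 1)
print(is_well_formed_query(query), is_well_formed_query(query[:-2]))  # True False
```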

29:11

Apart from that, in this particular scenario the methodology targeted a web server, so of course it made sense to have a lot of HTTP-based attacks, and one of those was an HTTP POST flood.

29:25

In this attack we upload random large files to the server, just to overload it with bandwidth and throughput so that it cannot scale.

29:40

We also have low-and-slow DDoS attacks, where we send incomplete headers, a few million of them, trying to tie up every available resource so that legitimate requests get blocked.
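Low-and-slow attacks such as Slowloris keep connections open by trickling header lines without ever sending the blank line that ends an HTTP request header. A minimal sketch of the corresponding defense, flagging connections whose headers are still incomplete after a timeout, is below; the 10-second default and connection IDs are hypothetical.

```python
class SlowHeaderMonitor:
    """Flags connections whose HTTP request headers stay incomplete too long."""

    HEADER_END = b"\r\n\r\n"  # blank line that terminates HTTP/1.x request headers

    def __init__(self, header_timeout: float = 10.0):
        self.header_timeout = header_timeout
        self.conns: dict[str, tuple[float, bytes]] = {}  # conn_id -> (start, buffer)

    def on_data(self, conn_id: str, data: bytes, now: float) -> None:
        """Buffer bytes; the start time is fixed by the first data seen."""
        start, buf = self.conns.get(conn_id, (now, b""))
        self.conns[conn_id] = (start, buf + data)

    def stale_connections(self, now: float) -> list[str]:
        """Connections still without a complete header after the timeout."""
        return sorted(cid for cid, (start, buf) in self.conns.items()
                      if self.HEADER_END not in buf and now - start > self.header_timeout)


mon = SlowHeaderMonitor(header_timeout=10.0)
mon.on_data("slow", b"GET / HTTP/1.1\r\nHost: example\r\n", 0.0)
mon.on_data("slow", b"X-Keep: alive\r\n", 5.0)  # trickled header line
mon.on_data("normal", b"GET / HTTP/1.1\r\nHost: example\r\n\r\n", 0.0)
print(mon.stale_connections(11.0))  # ['slow']
```

Measuring from the first byte, not the most recent one, is what defeats the trickle: each new header line would otherwise reset an idle timer.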

29:58

If you look at this methodology, this is how the tool is supposed to work.

30:05

It should block all the HTTP Slowloris traffic, marked by the red line.

30:10

The legitimate responses should go through. Similarly, we also have an NTP flood. NTP floods have become very common: there are certain vulnerabilities in NTP, the Network Time Protocol, that have been exploited over and over for DDoS purposes. So we created an NTP flood where the attackers, pretending to be NTP servers, send a huge number of NTP responses to the victim. So not every attack we had here made sense for a web server.
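Because the flood described here consists of NTP responses the victim never asked for, one common stateful countermeasure is to admit inbound packets from UDP port 123 only if they match an outstanding request. The sketch below illustrates that idea under simplifying assumptions (one response per request, exact address and port matching); it is not the specific technique any vendor used in this test.

```python
NTP_PORT = 123


class NtpReflectionFilter:
    """Admits inbound NTP responses only when they answer a request we saw go out."""

    def __init__(self) -> None:
        self.outstanding: set[tuple[str, int, str]] = set()  # (client_ip, client_port, server_ip)

    def on_outbound(self, client_ip: str, client_port: int, server_ip: str, server_port: int) -> None:
        if server_port == NTP_PORT:
            self.outstanding.add((client_ip, client_port, server_ip))

    def allow_inbound(self, src_ip: str, src_port: int, dst_ip: str, dst_port: int) -> bool:
        if src_port != NTP_PORT:
            return True  # not an NTP response; other checks would apply
        key = (dst_ip, dst_port, src_ip)
        if key in self.outstanding:
            self.outstanding.discard(key)  # one response per request in this sketch
            return True
        return False  # unsolicited NTP response: likely reflection traffic


f = NtpReflectionFilter()
f.on_outbound("192.0.2.10", 40000, "198.51.100.1", NTP_PORT)
print(f.allow_inbound("198.51.100.1", NTP_PORT, "192.0.2.10", 40000))  # True
print(f.allow_inbound("203.0.113.5", NTP_PORT, "192.0.2.10", 40000))   # False
```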

30:42

But we used different combinations to create a comprehensive set of variants and understand whether the mitigation tool is indeed capable of blocking everything and has the right strategies for different attack variants, even though some of them might not make sense for the victim it is protecting.

31:04

This is where we added some randomization. We have a very realistic simulation of the Mirai botnet and its C&C communications, followed by the DDoS attack, and we went on to create something unique.

31:18

We created our own floods launched from a Mirai compromise: some relevant to this particular network, like DNS and HTTP floods, but also some that may not be relevant to this server, since it’s an HTTP server, yet are still very effective DDoS attacks, like, you know, [unclear].

31:49

So that’s a very nice attack. And every time we ran the test, to keep a random factor going, we introduced random variations of the attacks; for example, in the DNS flood we changed the queries from A to AAAA.
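Varying the query type, as described for the DNS flood, means changing the QTYPE field of each query (1 for A, 28 for AAAA, per RFC 1035 and RFC 3596). Here is a minimal sketch of building such queries with a seeded random choice; the function names are hypothetical, and this is not how BreakingPoint generates its traffic.

```python
import random
import struct

QTYPE_A, QTYPE_AAAA, QCLASS_IN = 1, 28, 1


def encode_qname(name: str) -> bytes:
    """Encode a domain name as length-prefixed labels ending in a zero byte."""
    out = b""
    for label in name.rstrip(".").split("."):
        raw = label.encode("ascii")
        if not 0 < len(raw) <= 63:
            raise ValueError(f"invalid label: {label!r}")
        out += bytes([len(raw)]) + raw
    return out + b"\x00"


def build_query(name: str, qtype: int, query_id: int) -> bytes:
    # Header: ID, flags (RD set), one question, no answer/authority/additional records.
    header = struct.pack("!6H", query_id, 0x0100, 1, 0, 0, 0)
    return header + encode_qname(name) + struct.pack("!2H", qtype, QCLASS_IN)


def randomized_queries(name: str, count: int, seed: int = 0) -> list[bytes]:
    """Queries whose type and ID vary randomly but reproducibly (seeded)."""
    rng = random.Random(seed)
    return [build_query(name, rng.choice((QTYPE_A, QTYPE_AAAA)), rng.randrange(0x10000))
            for _ in range(count)]


queries = randomized_queries("example.com", 5, seed=1)
print([struct.unpack("!H", q[-4:-2])[0] for q in queries])  # each 1 (A) or 28 (AAAA)
```

Seeding the generator keeps each run reproducible while still varying the traffic, which helps separate a genuine mitigation gap from run-to-run noise.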

32:07

Things like that create random variants and let us confirm that the tool isn’t highly optimized to protect against only certain types of attacks, only to start failing when things change.

32:22

That was on our minds while creating this DDoS test. Ultimately, what was a very pleasurable experience, at least for me, was to combine everything and create a huge flood that included the excessive Slowloris, Mirai with all five of its variants, the Network Time Protocol attacks, DNS query amplification, and the volumetric attacks, all coming together.

32:51

But at the same time, it’s mixed with legitimate traffic. So the mitigation tool has to apply all its techniques at once and ensure they don’t hamper legitimate requests, because the legitimate traffic still includes DNS, HTTP, and HTTPS requests and responses, and that should be unaffected while this whole 300 gigs of attack is blocked.

33:20

Of course, if you go through the report, you’ll see how some of these attacks played out and how we configured them, with graphs and details.

33:33

We also looked at individual mitigation: we ran each attack on its own, such as the UDP fragmentation and the NTP flood, and measured mitigation efficiency.

33:44

We measured with nanosecond granularity to see whether there were impacts or slowdowns and whether overall resiliency held up. This test case ran for hours and hours, and there’s a big spike at the end.

33:59

That big spike is where all the DDoS attacks were combined and we really stressed the system to its limits, to see whether it could simultaneously apply all its DDoS mitigation techniques to block this massive flood.

34:20

Now I want to briefly talk about the hardware we used for this exercise: our CloudStorm.

34:26

This is our new 100-gig platform, with a strong network processor, onboard encryption, and stronger FPGAs.

34:35

It can send application traffic, throughput traffic, and layer 2/3 traffic simultaneously, which helped us create this 300 gigs of DDoS traffic along with up to 60-70 million connections.

34:52

To ensure that both memory and throughput were stressed, we used BreakingPoint as the software.

34:59

It’s a very well-known security test solution with 38,000-plus attacks and malware samples, including different DDoS variants.

35:08

Of course, we used only 14 or 15 variants, but depending on the situation you can run all the variants together. One important thing was that we mixed realistic applications in, and for the reports we had nanosecond-granularity reporting.

35:23

For the hardware, we used three CloudStorm cards to generate the 300 gigs of traffic towards the victim. The victim and the clients were also all simulated by CloudStorm.

35:37

With that, I want to pass it over to TAKA, who was my counterpart in this exercise, to talk about A10’s part.

TAKA

35:46

Thanks, Amritam. The DDoS mitigation device used for this test was A10’s flagship model, Thunder 14045 TPS, which has industry-leading performance: for example, 300 Gbps of bandwidth and 440 Mpps of packet-rate performance. As Don explained before, it provides surgical DDoS protection against multi-vector DDoS attacks.

36:13

Let’s take a look at the test. IXIA is simulating the clients, the attackers and the servers.

36:21

A10 Thunder TPS is placed in-path between the Untrusted Zone and the Trusted Zone for always-on protection.

36:30

In this test case, TPS provides DDoS protection and mitigation on the same box. On the left, we list all the attack profiles and the legitimate user traffic used for this test.

36:43

Since the Mirai attack and the Dyn DNS DDoS used multiple attack vectors, 15 attack vectors were put together for this test.

36:53

As Amritam mentioned, we tested each attack individually first, and then finally we tested all the attacks together.

37:01

So today we are showing a recorded test video of the multi-vector attack with the full attack spectrum.

DON

37:09

So we’ll have Amritam back to start with the BreakingPoint section.

AMRITAM

37:16

So this is literally a two-hour-plus attack, but we wanted to show a small snapshot for this webinar.

37:23

If you look at the bandwidth section, this is the 5 gigs of realistic application traffic being sent.

37:30

Ideally, this traffic should remain undisturbed throughout all the DDoS attacks. What I’m showing here is where we launched all the DDoS vectors together.

37:39

So now you see the bandwidth going up 75 to 55 gig. It should read somewhere around 300 gig.

37:46

That was all of those DDoS vectors I talked about coming towards the server together, and you can see some of the flows we created: the Mirai flows I talked about, HTTP Slowloris, UDP fragmentation, NTP and so on, and you can see all of these running.

38:03

You will probably see some of those attacks showing as successful. That’s from the client perspective: an attack counts as successful because it is able to send its packets.

38:11

But ultimately, the reports show whether the packets actually reached the victim interface or not, and that’s how you understand whether the mitigation tool was successful.

38:21

Let’s also look at the TCP floods. As I showed, we had multiple TCP floods, so we were literally sending 26 million TCP connections per second, and from the success rate you can easily see that A10 was able to mitigate most of it.

38:37

So this is the interface here, showing the attack. Even while the attack is running, you can see RX holding at 5 gig. That’s very important. Out of the six interfaces that were sending traffic, the RX portion remaining at 5 gig means that all the attack traffic got mitigated, the realistic traffic was not impacted, and the server was able to serve all the clients that were there at that point in time. Then you see the attacks coming down and the traffic going back to 5 gig.
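Amritam’s reasoning, that victim-side RX holding at the 5-gig legitimate baseline means the attack was absorbed without hurting real clients, can be expressed as a simple pass/fail check. This is a minimal Python sketch, not BreakingPoint’s reporting logic; the function name, the 5 percent tolerance and the sample values are hypothetical.

```python
def assess_mitigation(baseline_gbps, victim_rx_gbps, tolerance=0.05):
    """Compare victim-side RX samples taken during an attack with the
    legitimate-traffic baseline measured before it (hypothetical helper)."""
    low = baseline_gbps * (1 - tolerance)
    high = baseline_gbps * (1 + tolerance)
    # Any sample well above baseline means attack packets reached the victim.
    if max(victim_rx_gbps) > high:
        return "attack traffic leaking to victim"
    # Any sample well below baseline means legitimate traffic was dropped.
    if min(victim_rx_gbps) < low:
        return "legitimate traffic impacted"
    return "mitigated; legitimate traffic intact"


print(assess_mitigation(5.0, [5.01, 4.98, 5.00]))  # mitigated; legitimate traffic intact
print(assess_mitigation(5.0, [5.0, 38.2, 41.7]))   # attack traffic leaking to victim
print(assess_mitigation(5.0, [5.0, 3.1, 4.9]))     # legitimate traffic impacted
```

Checking the maximum and minimum samples, rather than the mean, keeps a brief leak or dip from being averaged away.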

39:11

So this is just a small snippet. You can see the failed portion as well; this is from the statistics view.

39:19

But when you go to the report, that’s where you get the full granularity on the DDoS attacks and what happened.

39:26

What was the actual impact? Was there any latency on HTTP sessions? Which attacks generated the most throughput, and which attacks targeted memory?

39:38

With that, I’ll pass it over to TAKA for the A10 perspective on the attacks.

TAKA

39:45

Okay, thanks. Let me quickly go over the test from the Thunder TPS side.

39:52

We are showing the traffic chart for each protected service port, for example HTTP, DNS, TCP/UDP and other ports.

40:00

You see all dropped traffic in red and all passed, clean traffic in green.

40:07

Here’s another summary chart, which includes the session count; you can see it on the top right.

40:13

Here is the system health of the TPS box. In the CPU usage chart on the bottom right, you can see the maximum CPU usage is around 50 percent.

40:25

Now moving to the mitigation console, which provides a live DDoS dashboard. The green chart indicates live traffic, and the counters on the right-hand side show the DDoS counts.

40:41

You can see [inaudible] attack and [inaudible] attack.

40:45

We can also provide detail [inaudible] on the HTTP service. On the right-hand side you can see some counters [inaudible] counters for [inaudible] authentication and the HTTP authentication service [inaudible], indicating protection against the [inaudible] attack, the [inaudible] attack and Slowloris attacks.

41:05

This page also provides more detail on the attacks; at the top you see the packet rate, [inaudible] and the packet drop rate.

41:14

Those kinds of details are available on the mitigation console. Now moving to the DNS service port, you see the majority of traffic showing in red, which means it was successfully dropped.

41:28

On the right-hand side you see counters for the service limit, DNS authentication and DNS [inaudible] stats, indicating protection against the DNS flood attack.

41:39

It also covers the DNS [inaudible] attack, and it provides statistics on the amplification attacks, NTP and DNS, on the bottom right.

41:49

Now moving to the TCP service port: this one shows protection against the Mirai attack, including common [inaudible] connections.

42:00

The next one is UDP. This shows the results against the fragmentation attack. Also, this is a summary log of the DDoS from the TPS: as you see at the top, the flood attack, the [inaudible] attack, the [inaudible] attack and also several fragmented [inaudible] attacks.

42:20

And finally we can see the DNS malformed [inaudible] attack. So TPS successfully detected and mitigated all the attacks.

42:30

In summary, as we saw, TPS successfully defended against over 300 Gbps of attack traffic and also [inaudible] traffic, due to its performance and architecture design, including its hardware acceleration capability.

42:45

For example, for the [inaudible] attack or the Christmas Tree flood attack, TPS only utilized up to 60 percent CPU the whole time while handling all 300 Gbps of attack traffic. With Thunder TPS DDoS protection, all 15 attack vectors were successfully mitigated. And more importantly, there was no interruption to the legitimate user traffic. So this concludes our recorded test.

DON

43:17

Yeah, fantastic guys. Now for all you guys I know that’s a lot of information to take in, right?

43:24

But in the end, this is really about … how can we help you in this threat environment with DDoS?

43:31

And especially since these investment decisions are sometimes very expensive, you need some real data to make them.

43:40

And so we’re very excited that IXIA has published a DDoS methodology. These are the assets available for you to download as well: https://www.a10networks.com/wp-content/uploads/A10-TPS-WP-Testing_DDoS_Defense_Effectiveness_at_300_Gbps_Scale_and_Beyond-1.pdf

43:49

Hopefully you gathered some useful information from this session. Please do take a look at this and consider it as you make your decision.

44:02

We think it’s a great way to do a head-to-head analysis when you’re going to make a DDoS defense investment, or really to consider whether your defenses are adequate today. And testing is a wonderful way to do that.

44:21

All right. So it looks like we have a little bit of time to take some questions.

44:25

If Alexander … Alex?

HOST

44:33

Yes, sorry, I was on mute and could not get off. As a reminder to everybody, the questions panel is located on the left-hand side of your screen, so go ahead and add your questions there.

44:45

And I do see that we have one question. I think this is a question for IXIA, but I’m not sure … what were your criteria for selecting the DDoS attacks that you put in the methodology?

AMRITAM

45:01

Thanks Alex. I will take that.

45:04

So basically, when we created the DDoS attacks, as I said, we had around 40-plus DDoS attacks to choose from, but we wanted a mix that would include volumetric, [inaudible] volumetric, memory-based and application-based attacks.

45:20

So this … and some Mirai-like botnet attacks. That was the criterion: we wanted to pick some from every end of the spectrum to get very comprehensive coverage.

DON

45:33

That was a good question. Thank you.

HOST

45:36

Awesome. And I think this one may be for you A10, Don.

45:40

DNS is our biggest problem. What does it do to protect DNS servers?

DON

45:50

Okay, well yeah, I think that really is an important portion because you know, as we know, DNS is what makes the internet work.

45:57

I think there’s really two parts to that question, and so maybe we can try to address both sides.

46:02

As you know, one of the problems with DNS, and a recent topic, is DNS infrastructure being used as a mechanism for reflection attacks, which generate a lot of volume, right?

46:16

And that’s definitely a problem. But many service providers are also offering DNS services, so their DNS servers are being attacked as a way to cut off the service providers’ downstream customers, right?

46:32

And so the thing that you have to look for, especially in DNS, is how much the attack strategies are changing.

46:39

In the past, as these attacks were used against DNS servers, attackers would send the same queries to the servers over and over again.

46:49

Then the technology evolved, and now many DNS servers have request rate limiting, so they can tell: why is this particular agent asking for the same thing over and over again?
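The request rate limiting Don describes can be sketched as a sliding-window counter keyed on the source and the query name. This is a hypothetical Python illustration, not the logic of any particular DNS server or of Thunder TPS; the class name, the limit of five queries and the one-second window are assumptions.

```python
import time
from collections import defaultdict, deque


class QueryRateLimiter:
    """Hypothetical per-(source IP, query name) limiter: allow at most
    `limit` identical queries from one source within `window` seconds."""

    def __init__(self, limit=5, window=1.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock  # injectable so the behavior can be tested
        self.history = defaultdict(deque)  # (src, qname) -> recent timestamps

    def allow(self, src_ip, qname):
        now = self.clock()
        # DNS names are case-insensitive; normalize before counting.
        key = (src_ip, qname.lower().rstrip("."))
        stamps = self.history[key]
        # Forget queries that have slid out of the time window.
        while stamps and now - stamps[0] >= self.window:
            stamps.popleft()
        if len(stamps) >= self.limit:
            return False  # same name asked too often: rate-limit
        stamps.append(now)
        return True


# Usage: a fixed clock makes the outcome deterministic.
limiter = QueryRateLimiter(limit=3, window=1.0, clock=lambda: 0.0)
print([limiter.allow("198.51.100.7", "www.example.com") for _ in range(5)])
# [True, True, True, False, False]
print(limiter.allow("198.51.100.7", "k3x9q2.example.com"))  # True
```

Because the key includes the exact query name, a flood that changes the name on every request never trips this limit. A production limiter would also expire idle keys so memory stays bounded.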

47:03

And so now attackers use DGAs, or domain generation algorithms, which let them keep querying, but for different, random domain names.

47:22

And so DNS defenses are quite complex. In addition to high-level measures like request rate limiting, you actually need deep packet inspection capabilities to really understand the queries around the fully qualified domain name (FQDN), every element of the FQDN.
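One way to inspect "every element of the FQDN" is to watch the leftmost label of queries under each parent zone: random-subdomain floods produce many distinct, random-looking labels. This Python sketch is a hypothetical heuristic, not A10’s algorithm; the entropy threshold of 3.0 bits per character, the limit of 100 distinct labels and the two-label parent-zone rule are all assumptions.

```python
import math
from collections import Counter, defaultdict


def label_entropy(label):
    """Shannon entropy, in bits per character, of one DNS label."""
    counts = Counter(label)
    n = len(label)
    return -sum(c / n * math.log2(c / n) for c in counts.values())


class RandomSubdomainDetector:
    """Hypothetical detector: flags a parent zone once it has received
    more than `max_unique` distinct high-entropy leftmost labels."""

    def __init__(self, max_unique=100, min_entropy=3.0):
        self.max_unique = max_unique
        self.min_entropy = min_entropy
        self.seen = defaultdict(set)  # parent zone -> random-looking labels

    def observe(self, fqdn):
        labels = fqdn.lower().rstrip(".").split(".")
        if len(labels) < 3:
            return False  # no subdomain to inspect
        # Simplification: the last two labels are treated as the parent
        # zone; a real system would consult the Public Suffix List.
        parent = ".".join(labels[-2:])
        leftmost = labels[0]
        if label_entropy(leftmost) >= self.min_entropy:
            self.seen[parent].add(leftmost)
        return len(self.seen[parent]) > self.max_unique


detector = RandomSubdomainDetector()
print(detector.observe("www.example.com"))  # False ("www" has zero entropy)
flags = [detector.observe(f"{i:03d}abcdefghijk.victim.example") for i in range(150)]
print(flags.index(True))  # 100: the 101st distinct random label trips the alarm
```

For brevity the detector never ages labels out; a real one would count them per time window and per source.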

47:45

And so yes, absolutely: A10 Thunder TPS is a very powerful solution for DNS because we go very far into the query.

47:57

We’re not just rate-limiting query requests. We’re actually looking at the FQDN and the request type and applying policies to provide that resilience for DNS services.
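Looking at the FQDN and the request type starts with parsing the question section of each query. As a hedged illustration only, not A10’s implementation, this Python sketch decodes the question per the RFC 1035 wire format and applies a hypothetical policy: drop malformed queries, drop names deeper than an assumed ten labels, and pass only an assumed allowlist of query types.

```python
import struct

ALLOWED_QTYPES = {1, 5, 15, 28}  # assumed allowlist: A, CNAME, MX, AAAA
MAX_LABELS = 10                  # assumed cap on name depth


def parse_question(packet: bytes):
    """Return (labels, qtype) for the first question in a DNS query,
    following the RFC 1035 wire format. Compression pointers are rejected,
    which is reasonable for the question section of a query."""
    if len(packet) < 12:
        raise ValueError("shorter than the 12-byte DNS header")
    (qdcount,) = struct.unpack_from("!H", packet, 4)
    if qdcount < 1:
        raise ValueError("no question")
    pos, labels = 12, []
    while True:
        if pos >= len(packet):
            raise ValueError("truncated name")
        length = packet[pos]
        pos += 1
        if length == 0:
            break  # root label ends the QNAME
        if length > 63 or pos + length > len(packet):
            raise ValueError("bad label length or compression pointer")
        labels.append(packet[pos:pos + length].decode("ascii", "replace").lower())
        pos += length
    if pos + 4 > len(packet):
        raise ValueError("truncated QTYPE/QCLASS")
    qtype, _qclass = struct.unpack_from("!HH", packet, pos)
    return labels, qtype


def apply_policy(packet: bytes) -> str:
    """Hypothetical policy on the FQDN and request type."""
    try:
        labels, qtype = parse_question(packet)
    except ValueError:
        return "drop"  # malformed query
    if len(labels) > MAX_LABELS or qtype not in ALLOWED_QTYPES:
        return "drop"
    return "pass"


def build_query(fqdn: str, qtype: int) -> bytes:
    """Build a minimal one-question query, for exercising the parser."""
    header = struct.pack("!HHHHHH", 0x1234, 0x0100, 1, 0, 0, 0)
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in fqdn.rstrip(".").split(".")
    )
    return header + qname + b"\x00" + struct.pack("!HH", qtype, 1)


print(parse_question(build_query("www.Example.com", 1)))  # (['www', 'example', 'com'], 1)
print(apply_policy(build_query("example.com", 255)))       # drop (ANY is not allowlisted)
print(apply_policy(build_query("www.example.com", 28)))    # pass (AAAA)
```

Dropping on a parse failure is one simple way to handle malformed-query attacks like the one shown in the demo; the allowlist and depth limit would be tuned per service.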

HOST

48:15

Awesome. I think this is another one for IXIA. We saw in the video the RX traffic going up slightly once the DDoS attack started. Does that mean the victim did get affected? Partially?

AMRITAM

48:36

That’s another very good question. When we started generating the DDoS traffic, what I think, and TAKA can probably chime in as well, is that A10 has different types of challenge mechanisms, and those challenges were coming back at us.

48:59

So on the client side, the attacker side, we were registering those as RX, or receive, traffic.

49:06

And when we dug into the report, we saw that on the server side none of those responses were coming in, because none of the DDoS requests got through. So I believe the mitigation tool had different challenge mechanisms to counter the several million DDoS attacks we sent, and that’s where we saw a bump in the RX traffic from the client’s perspective.

TAKA

49:38

Hey, let me chime in. Yes, your understanding is correct. On the Thunder TPS we have multiple challenge, or user authentication, mechanisms.

49:46

It isn’t really authenticating the user; from the source IP perspective, we are just trying to make sure whether this is a valid user or not.

49:54

And for some of the challenges, we actually send something back to the source; a SYN cookie is one example.
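A SYN cookie lets the mitigation device answer every SYN without storing per-connection state: it encodes what it needs into the SYN-ACK’s initial sequence number, and only a client that completes the handshake returns that value plus one in its ACK. The Python sketch below follows the classic SYN cookie layout (5-bit time counter, 3-bit MSS index, 24-bit keyed hash) and is not necessarily how Thunder TPS implements it; the 64-second tick, the MSS table and the truncated HMAC-SHA256 are assumptions.

```python
import hashlib
import hmac
import os

SECRET = os.urandom(16)              # per-boot secret (assumed)
MSS_TABLE = [536, 1220, 1440, 1460]  # assumed MSS values, indexed by 3 bits
TICK_SECONDS = 64                    # assumed granularity of the time counter


def _mac24(src, sport, dst, dport, t):
    """24-bit keyed hash of the connection 4-tuple and time counter."""
    msg = f"{src}|{sport}|{dst}|{dport}|{t}".encode()
    return int.from_bytes(hmac.new(SECRET, msg, hashlib.sha256).digest()[:3], "big")


def make_cookie(src, sport, dst, dport, client_mss, now):
    """Build the 32-bit ISN for the SYN-ACK: 5-bit time | 3-bit MSS index | 24-bit MAC."""
    t = (now // TICK_SECONDS) % 32
    idx = max((i for i, m in enumerate(MSS_TABLE) if m <= client_mss), default=0)
    return (t << 27) | (idx << 24) | _mac24(src, sport, dst, dport, t)


def check_cookie(ack_number, src, sport, dst, dport, now, max_age_ticks=1):
    """Validate the client's ACK; return the encoded MSS, or None to drop."""
    cookie = (ack_number - 1) % 2**32  # client acknowledges ISN + 1
    t, idx = cookie >> 27, (cookie >> 24) & 0x7
    if ((now // TICK_SECONDS) - t) % 32 > max_age_ticks:
        return None  # stale (or forged) time counter
    if idx >= len(MSS_TABLE) or _mac24(src, sport, dst, dport, t) != cookie & 0xFFFFFF:
        return None  # MAC mismatch: not a SYN-ACK we issued to this 4-tuple
    return MSS_TABLE[idx]


c = make_cookie("203.0.113.5", 40000, "198.51.100.10", 80, client_mss=1460, now=1000)
print(check_cookie(c + 1, "203.0.113.5", 40000, "198.51.100.10", 80, now=1010))  # 1460
print(check_cookie(c + 1, "203.0.113.5", 40000, "198.51.100.10", 80, now=1320))  # None (expired)
```

Since every SYN, spoofed or not, receives a SYN-ACK, the attacker side sees extra received traffic, which is consistent with the RX bump discussed above.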

50:01

We have those kinds of mechanisms for DNS and other protocols too.

HOST

50:11

Awesome. It looks like we have another question here. Does the Thunder TPS appliance have fail-open functionality for production traffic interfaces when the appliance fails?

DON

50:26

Let me take a shot at a portion of that, and then TAKA you can jump in as well.

50:31

So the answer is that some of our appliances do have hardware failover, in the traditional sense of what people think of for L2 inline deployments.

50:44

But in addition to that, the other portion that may have been missed, when TAKA was talking about the in-path capabilities, is that TPS integrates at both Layer 2 and Layer 3, right? So all the routing protocols are built into it.

51:01

So from a redundancy standpoint, and for being able to handle maintenance changes and things like that, Layer 3 integration becomes a pretty powerful way to do that as well.

51:15

Most people think of inline and L2, right? But I think you should also consider L3 in-path, because that actually offers quite a bit more flexibility. Is there anything else to say about that?

TAKA

51:30

No, actually, you are right. With the Layer 3 dynamic routing [inaudible] capability, we can work around those kinds of scenarios using routing protocols, especially in large network topologies.

51:46

If you’re considering a small enterprise use case, an inline deployment [inaudible] may be the ideal solution.

DON

51:56

Awesome. Well thank you everybody and thank you to our star presenters. As a reminder, we have included the resources which you should see on the left hand side of your screen.

HOST

52:13

Thanks to everybody who joined us today and thanks to everybody who made this webinar happen. You will receive an email with the on demand webinar shortly. As a reminder, if you have any questions, please feel free to reach out to Don, Amritam or TAKA directly.

52:29

Thank you all again for joining us, and we look forward to seeing you at another one of our webinars.